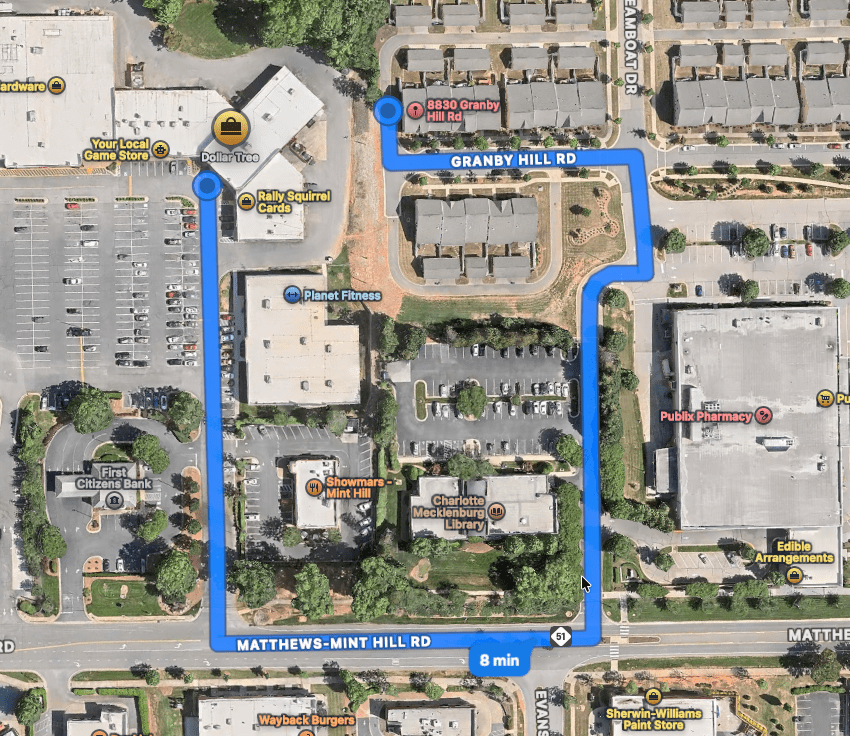

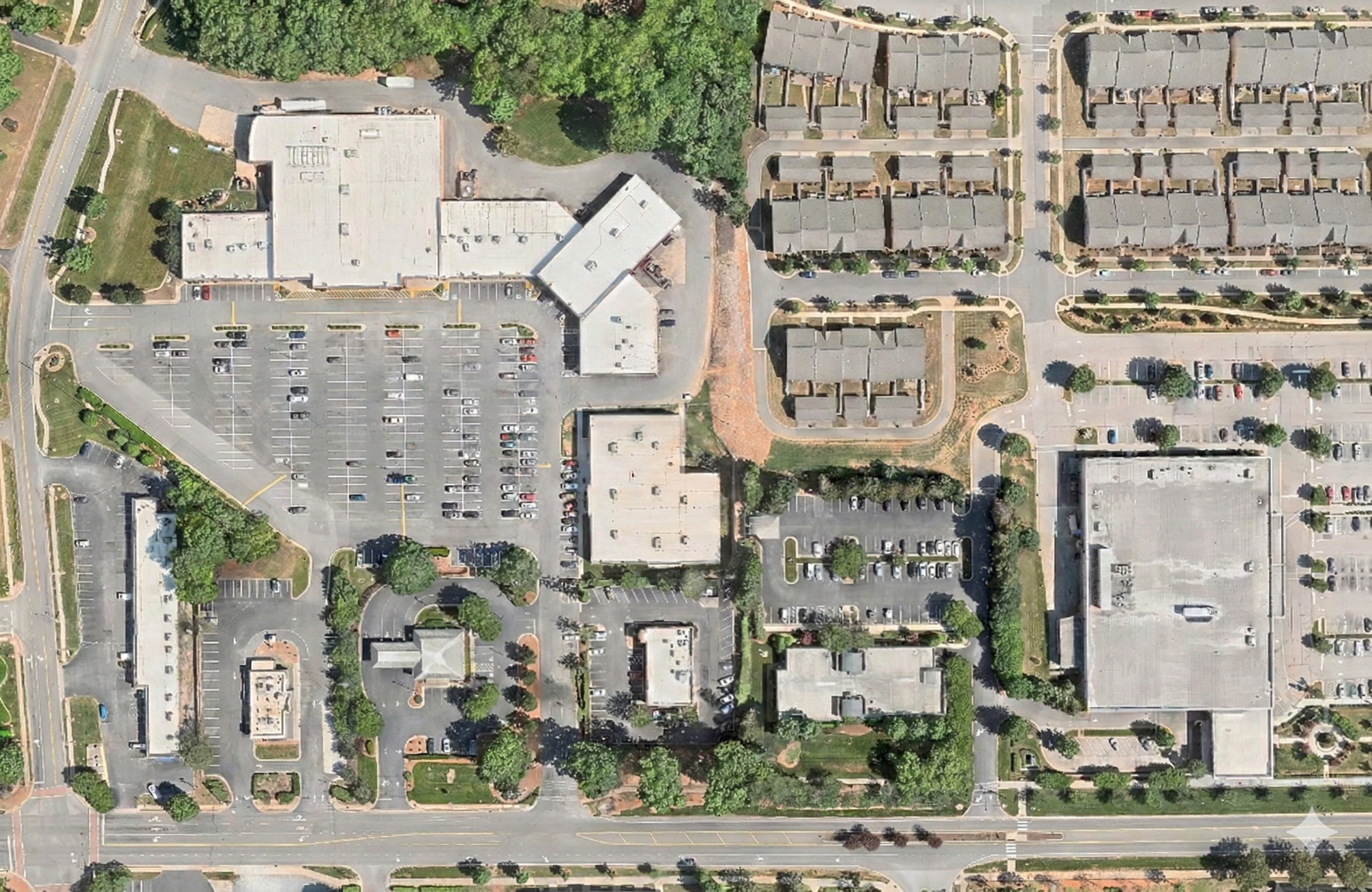

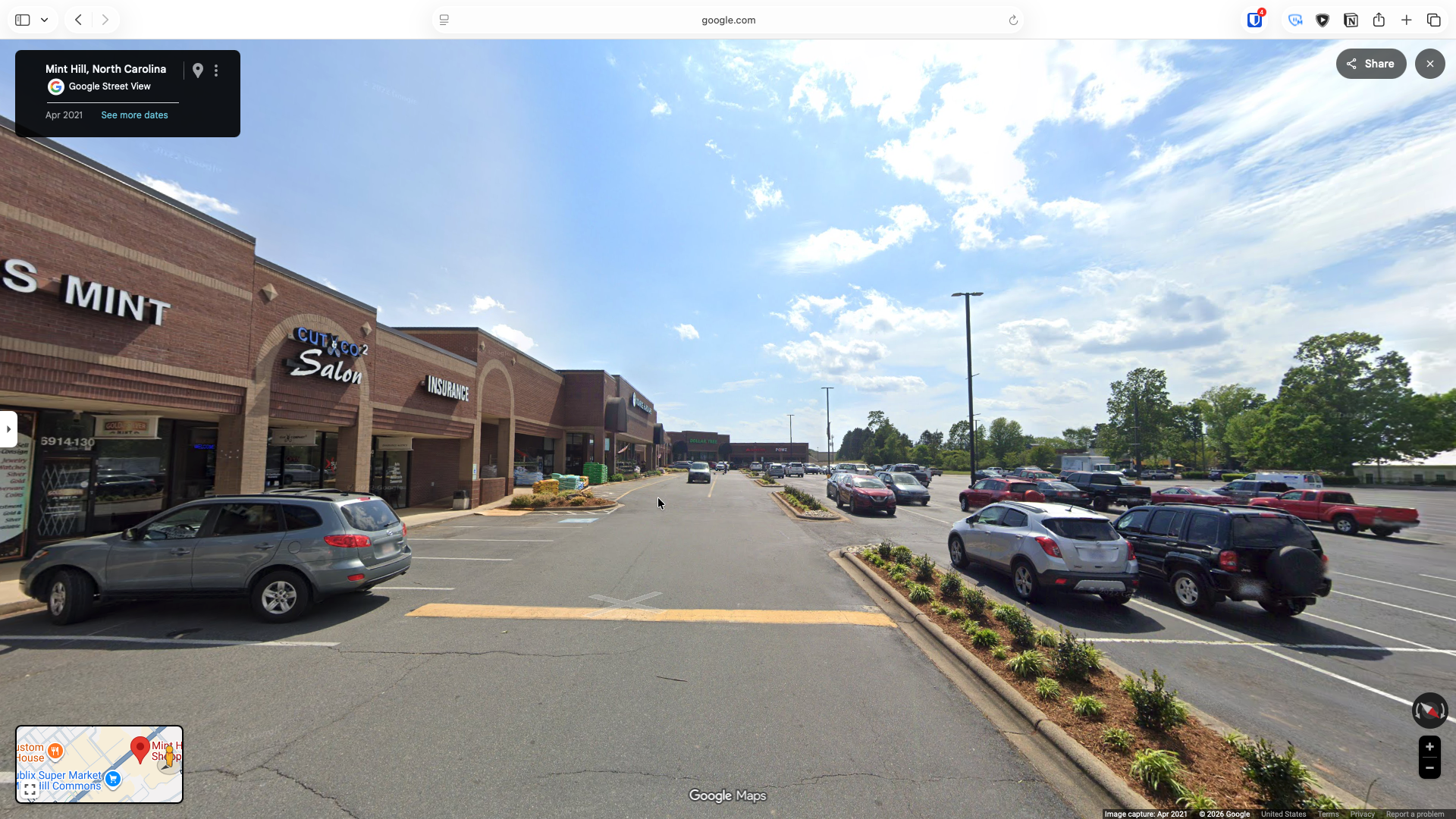

If you look at how many U.S. suburbs are built today, a clear pattern emerges. There’s the wide arterial road, often called a stroad. It’s usually six to eight lanes, built for speed. Along both sides sit strip malls, big-box stores, and standalone restaurants, each surrounded by large parking lots.

Branching off these roads are residential subdivisions. These neighborhoods are made up of winding streets and cul-de-sacs. They’re quiet and low-traffic by design, but they’re also difficult to navigate without a car. There are few direct paths, and no real reason to pass through unless you live there.

This layout reflects a simple assumption: everyone drives everywhere. But that assumption starts to break down once autonomous vehicles enter the picture.

The reason commercial areas have such large parking lots is straightforward. People drive from home, park, do what they need to do, then drive back. Every destination needs enough space to store all those cars at peak times.

Autonomous vehicles, especially shared robo-taxis, change that. Instead of parking, a car can drop you off and immediately leave to pick up someone else. The same vehicle can serve many people throughout the day. That makes parking far less necessary.

Once you don’t need acres of parking, that land becomes available for something else. A small pick-up and drop-off area can handle the same flow of people while taking up a fraction of the space.

The rest can be redeveloped. More shops, housing, parks, or public space. Instead of walking across a hot, empty parking lot, you step into a place designed for people. Buildings can line the street, provide shade, and create a more comfortable environment.

This also changes how transit works along these roads. Right now, buses often run infrequently and stop in places that feel hostile. You get off and face a long walk across a parking lot. There’s little shelter, a lot of noise, and constant traffic.

If those parking lots are replaced with actual development, the experience improves immediately. You step off the bus and you’re already close to where you want to go. There are buildings, shade, and activity right there. More people live and work along the corridor, which makes transit more useful. Higher ridership can support more frequent service and eventually better infrastructure like dedicated lanes or light rail.

These wide arterial roads already have the space for that shift. Some lanes can be repurposed for transit, wider sidewalks, or protected bike lanes without removing cars entirely.

Inside the subdivisions, a similar change becomes possible. Today, it’s hard to introduce small businesses into single-family neighborhoods, largely because of parking requirements. A small coffee shop, for example, would need enough parking for customers and staff, which often isn’t feasible.

If people arrive by autonomous vehicle, bike, or on foot, that requirement shrinks or disappears. A house could be converted into a small café, a barber shop, or a studio without needing a large parking area. These kinds of businesses could serve the immediate neighborhood instead of drawing large crowds from far away.

The street layout inside these neighborhoods can improve too. Cul-de-sacs are effective at limiting car traffic, which many residents like, but they also make walking inefficient. Two nearby points can require a long, indirect route.

You don’t have to redesign everything to fix that. Keeping the cul-de-sacs for cars while adding pedestrian and bike paths between them can create direct connections. What used to be a long walk becomes a short one. That makes it practical to walk or bike to nearby destinations within the neighborhood.

Taken together, these changes point to a different version of suburbia. Parking lots are replaced with housing, shops, and public space. Arterial roads become more balanced, supporting transit, walking, and biking alongside cars. Neighborhoods gain small-scale businesses. Walking and biking become more realistic options because distances shrink and routes improve.

People can still live in single-family homes. They can still use cars when they need to. But they’re no longer locked into one way of moving through the world, and the places they go feel better when they get there.

Autonomous vehicles don’t fix everything, but they remove one of the biggest constraints shaping suburban design: the need to store cars everywhere. Once that constraint is gone, a lot of new possibilities open up.